Layers of AI: From Classical Reasoning to Agentic Systems

January 17, 2026

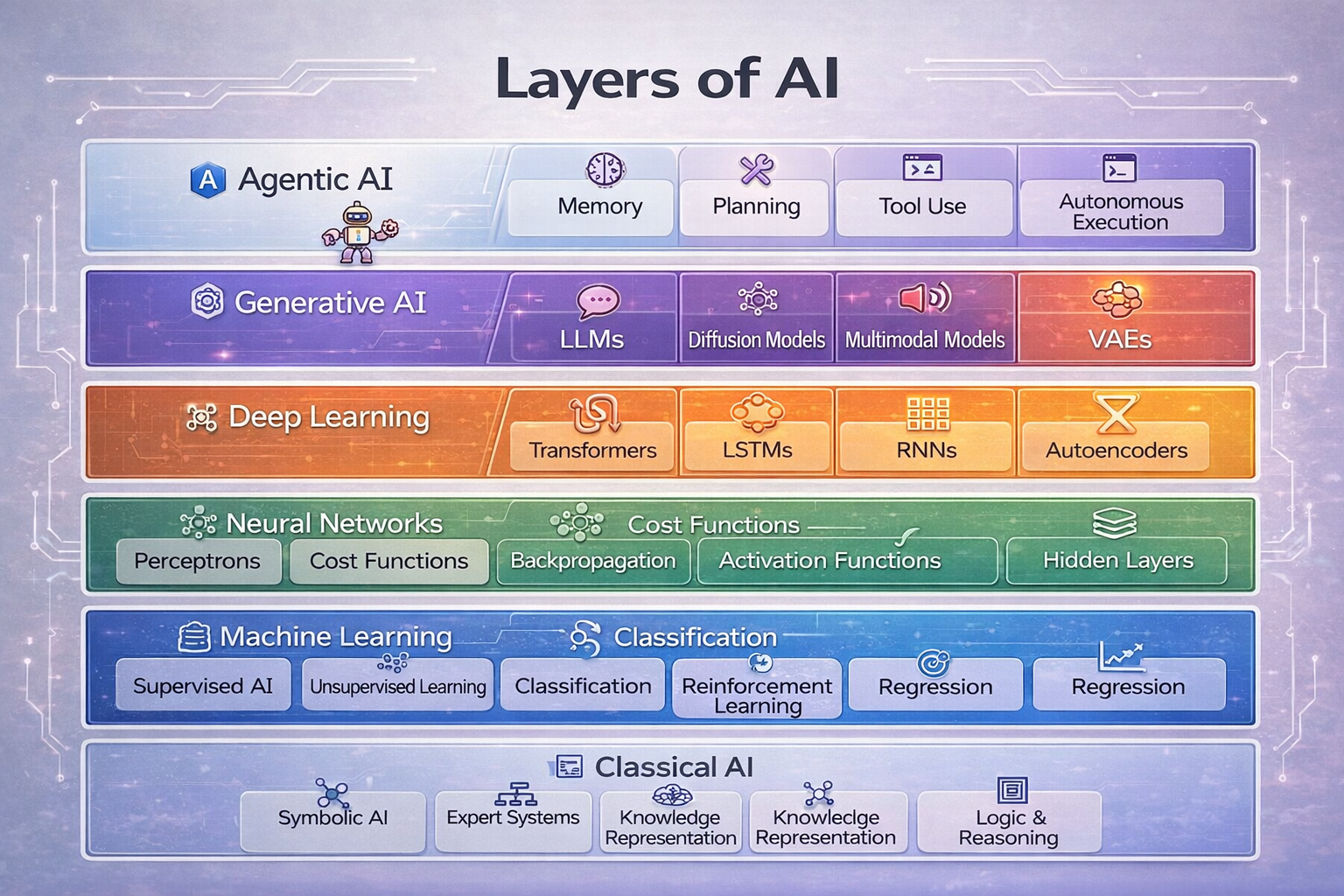

A practical, systems-level explanation of how modern AI builds upward from classical logic to agentic, autonomous systems, and why each layer still matters.


I’ve been having the same conversation over and over lately: someone says “AI,” and we’re clearly talking about two different things. One person means a rules engine. Another means a big language model. Another means an autonomous agent that can take actions. All of those are “AI,” but they live on different layers of the stack.

So I wanted to write this out in plain, weblog style and map the layers the way I actually think about them when I’m building systems. I keep finding that naming the layer up front saves me hours of confusion later.

If you’re new to the space, think of this as a guided walk through the floors of the building. If you’ve been in the trenches for a while, consider it a quick reset on where each part of the stack actually earns its keep.

Why I Like the Layered View

When debates about AI get messy, it’s usually because people are standing on different floors of the same building. They say the same word but point at totally different systems.

This framing helps me in real projects because it forces me to ask: which layer owns which behavior? If I can’t answer that, I don’t really understand the system I’m shipping.

- A rules engine is AI.

- A neural network is AI.

- A large language model is AI.

- An autonomous agent is AI.

They’re not the same thing. Each layer adds new capabilities and new ways to break, and if you skip a layer in your mental model, you also skip its failure modes.

It also keeps expectations sane. If you’re expecting planning behavior from a generative model, you’re going to be disappointed. If you expect a rules engine to “adapt,” you’ll be waiting a long time.

Layer 1: Classical AI — Reasoning Before Learning

This is the “old school” layer: explicit knowledge, hand-built rules, and logic. It’s not trendy, but it’s everywhere.

When I’m working on production systems, this is often the part people forget to mention, even though it keeps the rest of the stack safe and predictable. It’s the layer where humans say, “no matter what the model outputs, you cannot do this.”

What lives here:

- Symbolic AI

- Expert systems

- Knowledge graphs

- Logic and rule-based reasoning

It’s explainable and deterministic. If something goes wrong, you can usually trace it back to a rule. The downside is that it’s brittle and doesn’t scale well when the world gets fuzzy.

I think of it like guardrails on a mountain road. You don’t steer with guardrails, but you’re grateful they’re there when the road gets tricky.

But it’s still how we handle:

- Compliance

- Policy enforcement

- Safety constraints

- Business rules

Even modern AI systems lean on classical AI for guardrails. If you’re building anything serious, this layer is still part of the conversation, even if nobody calls it “AI” anymore.
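
Here’s a minimal sketch of what that guardrail idea can look like in code. Everything in it is made up for illustration (the `Action` type, the rule names, the 500 limit, the `"XX"` region); the point is only that the check is deterministic and every rejection traces back to a named rule.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    """A hypothetical action proposed by some upstream model."""
    kind: str            # e.g. "refund" or "email"
    amount: float = 0.0
    region: str = "US"


# Each rule is (name, predicate). The predicate returns True when the
# action VIOLATES the rule. Limits and regions are illustrative only.
RULES = [
    ("refund_over_limit", lambda a: a.kind == "refund" and a.amount > 500),
    ("blocked_region", lambda a: a.region in {"XX"}),
]


def check(action):
    """Return the names of all violated rules (empty list means allowed)."""
    return [name for name, violates in RULES if violates(action)]


print(check(Action("refund", amount=120.0)))  # []
print(check(Action("refund", amount=900.0)))  # ['refund_over_limit']
```

Because the result is a list of rule names instead of a bare yes/no, a blocked action comes with its own explanation, which is the traceability this layer is good at.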

Layer 2: Machine Learning — Learning From Data

Machine learning flips the script: instead of writing rules, you train models to learn patterns.

This is the layer where teams start caring a lot about data quality and feedback loops. The behavior comes from the data, so the data becomes the product.

Key ideas:

- Supervised and unsupervised learning

- Classification and regression

- Reinforcement learning

This is where uncertainty shows up. Models generalize; they also misgeneralize. You trade explainability for adaptability, and that trade never fully goes away.

In practice, I find that ML is often used to fill in the messy middle: personalization, scoring, ranking, forecasting. It’s powerful, but it needs monitoring and humility.
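
To make “the behavior comes from the data” concrete, here is a toy nearest-centroid classifier in plain Python. It’s a sketch under obvious assumptions: the four training points and the `low_risk` / `high_risk` labels are invented, and a real scoring model would be far more involved. Notice that nobody writes a rule; the decision boundary falls out of the samples.

```python
import math
from collections import defaultdict


def fit_centroids(samples):
    """samples: list of (features, label). Returns {label: mean feature vector}."""
    grouped = defaultdict(list)
    for features, label in samples:
        grouped[label].append(features)
    return {
        label: [sum(column) / len(rows) for column in zip(*rows)]
        for label, rows in grouped.items()
    }


def predict(centroids, features):
    """Pick the label whose centroid is closest in Euclidean distance."""
    return min(centroids, key=lambda label: math.dist(centroids[label], features))


# Invented toy data: two features per sample, two classes.
train = [
    ([1.0, 1.2], "low_risk"),
    ([0.8, 1.0], "low_risk"),
    ([4.0, 3.8], "high_risk"),
    ([4.2, 4.1], "high_risk"),
]

model = fit_centroids(train)
print(predict(model, [1.1, 0.9]))  # low_risk
print(predict(model, [3.9, 4.0]))  # high_risk
```

Change the training rows and the predictions change with them, with no code edits. That’s the adaptability, and it’s also why a point sitting between the two clusters gets a confident-looking label that the data barely supports.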

Layer 3: Neural Networks — The Computational Substrate

Neural networks are the function approximators that make modern ML work at scale. They don’t “reason.” They optimize.

The important part is that they’re good at capturing non-linear patterns, which is why they became the backbone of modern AI. But they’re also opaque, and they make it hard to explain why a particular output happened.

Common pieces:

- Perceptrons

- Hidden layers

- Activation functions

- Backpropagation

- Cost functions

Every higher layer inherits their strengths and their opacity. They’re powerful, but they’re not transparent.

Whenever someone says “the model decided,” I’m usually thinking: the network optimized. That framing keeps expectations grounded.
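
Here’s the smallest version of “the network optimized” I can think of: a single sigmoid neuron trained by gradient descent to reproduce logical OR. It’s a sketch, not a framework. The learning rate, epoch count, and the choice of OR as the task are my assumptions, and one neuron is the degenerate case of backpropagation (there’s only one layer to propagate through).

```python
import math


def sigmoid(z):
    """Activation function: squashes any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def predict(w, b, x):
    return sigmoid(w[0] * x[0] + w[1] * x[1] + b)


def train_neuron(data, epochs=2000, lr=0.5):
    """Stochastic gradient descent on the cross-entropy cost."""
    w, b = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for x, y in data:
            # For sigmoid + cross-entropy, the gradient with respect to
            # the pre-activation is simply (prediction - target).
            err = predict(w, b, x) - y
            w[0] -= lr * err * x[0]
            w[1] -= lr * err * x[1]
            b -= lr * err
    return w, b


OR_DATA = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]
w, b = train_neuron(OR_DATA)

for x, y in OR_DATA:
    print(x, "target", y, "output", round(predict(w, b, x), 3))
```

Nothing in that loop knows what OR means. It nudges three numbers until the cost goes down, which is worth remembering the next time a much larger network appears to “decide” something.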

Layer 4: Deep Learning — Representation at Scale

Deep learning is what happens when you stack enough layers in a neural network that it learns its own intermediate representations instead of relying on hand-engineered features.

This is the layer that finally made perception feel practical. Before deep learning, vision and speech systems worked, but they were narrow and brittle. After deep learning, they got surprisingly robust.

Architectures you’ll recognize:

- Convolutional neural networks (CNNs)

- Recurrent neural networks (RNNs)

- LSTMs

- Transformers

- Autoencoders

This is how we get perception (vision and speech) and the embeddings that other components build on. It creates strong components, but it still doesn’t automatically equal “intelligence.”

I like to think of deep learning as the sensory system of the stack. It sees and hears really well, but it still needs structure and context from the layers above.
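
A tiny example of why stacking layers matters: XOR can’t be computed by any single linear layer, but a two-layer network handles it because the hidden layer re-describes the input first. One loud caveat: the weights below are set by hand to keep the sketch short and deterministic. In real deep learning they would be learned from data.

```python
def relu(values):
    """Activation: keep positive values, zero out the rest."""
    return [max(0.0, v) for v in values]


def linear(weights, bias, values):
    """One dense layer: a weighted sum plus bias for each output unit."""
    return [
        sum(w * v for w, v in zip(row, values)) + b
        for row, b in zip(weights, bias)
    ]


# Hidden layer (hand-set, NOT learned): unit 0 counts active inputs,
# unit 1 fires only when both inputs are active.
W1, B1 = [[1.0, 1.0], [1.0, 1.0]], [0.0, -1.0]
# Output layer: a linear readout of the hidden representation.
W2, B2 = [[1.0, -2.0]], [0.0]


def forward(x):
    hidden = relu(linear(W1, B1, x))
    return linear(W2, B2, hidden)[0]


for x in ([0, 0], [0, 1], [1, 0], [1, 1]):
    print(x, forward(x))
```

The four inputs map to 0, 1, 1, 0. The output layer on its own is still just a linear readout; it only works because the hidden layer turned the raw inputs into features where the problem becomes linear. That is the “representation” idea at the smallest possible scale.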

Layer 5: Generative AI — Creating, Not Just Predicting

Generative models changed how people experience AI. Instead of predicting labels, they generate text, images, audio, and code.

This is the layer that made AI feel conversational. It doesn’t just score or classify; it responds in natural language and can improvise across tasks.

Typical models:

- Large language models (LLMs)

- Diffusion models

- Multimodal models

- Variational autoencoders (VAEs)

They feel intelligent because they’re interactive and expressive. But on their own they still don’t execute plans or take actions. They generate.

That distinction matters a lot in real systems. A model can draft a plan; it cannot execute it without the system around it.
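
To show “generate, not act” in code, here is a deliberately crude stand-in for a generative model: a bigram sampler that picks each next word from the words that followed it in a tiny corpus. This is an assumption-heavy toy (real LLMs are neural networks, not lookup tables, and the corpus sentence is invented), but the shape is the same: sample a continuation, return text, and nothing else happens.

```python
import random
from collections import defaultdict


def build_bigrams(text):
    """Map each word to the list of words that followed it in the text."""
    words = text.split()
    table = defaultdict(list)
    for current, following in zip(words, words[1:]):
        table[current].append(following)
    return table


def generate(table, start, max_words, rng):
    """Sample a word sequence; stop at max_words or at a dead end."""
    out = [start]
    while len(out) < max_words and table.get(out[-1]):
        out.append(rng.choice(table[out[-1]]))
    return out


corpus = "the agent calls the tool and the tool returns the result"
table = build_bigrams(corpus)

words = generate(table, "the", 8, random.Random(0))
print(" ".join(words))
```

The output can read like a description of an agent calling a tool, but no tool was called. Turning generated text into an executed action is the job of the system around the model.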

Layer 6: Agentic AI — Acting With Intent

Agentic systems sit at the top of the stack. They combine generative models with memory, tools, and execution.

This is where you start to see workflows that feel like “work” is being done. The system can decide to call APIs, update state, or trigger other services, all driven by some loop that keeps it moving.

Capabilities you see here:

- Memory and state

- Planning

- Tool use

- Autonomous execution

- Feedback loops

Agents decide what to do, not just what to say. But they’re only as good as the layers underneath them. No classical rules? Unsafe. No ML? Brittle. No deep learning? Blind. No generative layer? Mute. Agents are not models—they’re systems.

That’s why I always push for explicit guardrails and clear ownership between layers. If the agent can take actions, you need to be even more intentional about where the brakes live.
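
Here is a stripped-down sketch of that loop with the brake made explicit. All of it is hypothetical: `scripted_planner` stands in for a generative model, the two tools return canned values instead of calling real services, and the 500 limit is an invented policy. What I want to show is the structure: plan, check against a rule, execute, write the result to memory, repeat.

```python
def scripted_planner(goal, memory):
    """Stand-in for a model: returns the next (tool, argument), or None when done."""
    if "balance" not in memory:
        return ("lookup_balance", goal["account"])
    if "refund" not in memory:
        return ("issue_refund", goal["amount"])
    return None


# Hypothetical tools. Each returns a (memory_key, value) pair.
TOOLS = {
    "lookup_balance": lambda account: ("balance", 300.0),
    "issue_refund": lambda amount: ("refund", amount),
}


def allowed(tool, arg):
    """Classical-rule brake: refunds above 500 are never executed."""
    return not (tool == "issue_refund" and arg > 500)


def run_agent(goal, max_steps=5):
    memory = {}
    for _ in range(max_steps):          # bounded loop, so it cannot run forever
        step = scripted_planner(goal, memory)
        if step is None:
            break
        tool, arg = step
        if not allowed(tool, arg):      # the rule wins over the planner
            memory["blocked"] = step
            break
        key, value = TOOLS[tool](arg)
        memory[key] = value             # feedback: results shape the next plan
    return memory


print(run_agent({"account": "A-1", "amount": 120.0}))
print(run_agent({"account": "A-1", "amount": 900.0}))
```

The first run ends with a balance and a refund in memory. The second ends with a `blocked` entry and no refund, because the check sits between planning and execution. Putting the brake there, and not inside the planner, is the design choice I care about: the rule holds no matter what the model proposes.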

The Takeaway: Layers Don’t Replace Each Other

A modern AI product usually includes all of these layers at once:

- Classical rules for compliance

- ML models for prediction

- Neural networks for approximation

- Deep learning for perception

- Generative models for interaction

- Agents for orchestration

If you pull one layer out, you degrade the whole system. In my experience, most real failures happen between layers, not within them.

That’s the uncomfortable part: the tricky bugs are usually in the glue. You can have a great model and still ship a lousy system because the handoffs are unclear.
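
One way to make a handoff less ambiguous is to validate it at the boundary. The sketch below assumes the generative layer hands the agent layer a JSON string describing a tool call. The `ToolCall` shape, the field names, and the tool list are all invented for illustration; the idea is that malformed output fails loudly here instead of quietly further down.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolCall:
    """Hypothetical contract between the generative layer and the agent layer."""
    tool: str
    amount: float


KNOWN_TOOLS = {"issue_refund", "lookup_balance"}


def parse_tool_call(raw):
    """Turn model text into a ToolCall, or raise ValueError with a specific reason."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc.msg}") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    tool = data.get("tool")
    if not isinstance(tool, str) or tool not in KNOWN_TOOLS:
        raise ValueError(f"unknown tool: {tool!r}")
    amount = data.get("amount")
    # bool is a subclass of int in Python, so reject it explicitly.
    if isinstance(amount, bool) or not isinstance(amount, (int, float)):
        raise ValueError("amount must be a number")
    return ToolCall(tool=tool, amount=float(amount))


print(parse_tool_call('{"tool": "issue_refund", "amount": 120}'))
```

Once the boundary has a typed contract, a failure can be attributed to a layer: either the model produced something outside the contract, or the downstream code mishandled a valid `ToolCall`.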

Final Thought

AI didn’t get complicated overnight. It got layered. The question I keep asking myself is: which layer is actually responsible for the thing I’m shipping?

That’s the difference between “cool demo” and “reliable system.”

If you can name the layer, you can design for it. If you can’t, you’re probably betting your product on the wrong assumptions.